Google BERT tutorial

All the TSV files should be in a folder called `data` in the BERT directory. BERT, new March 11th, 2020. NLP handles things like text responses, figuring out the meaning of words within context, and holding conversations with us.

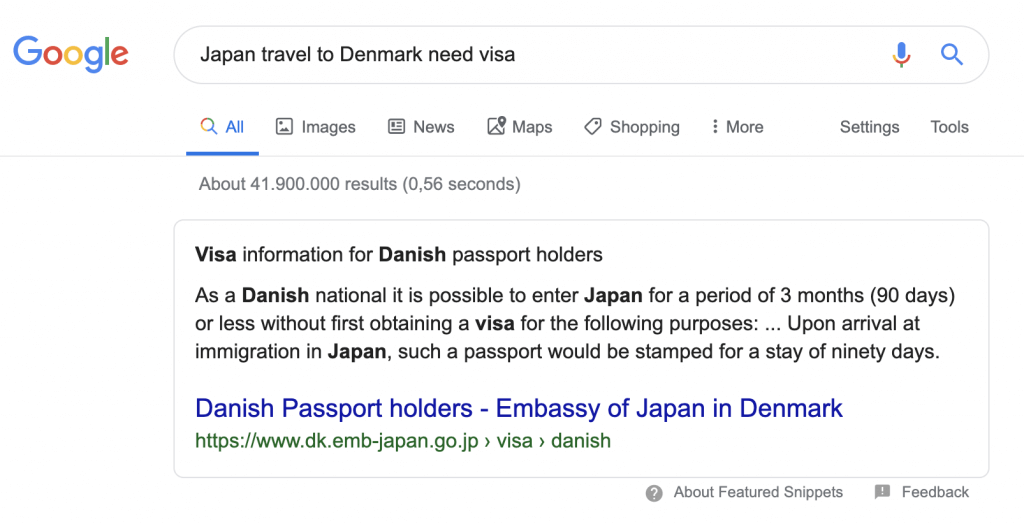

It helps computers understand human language.

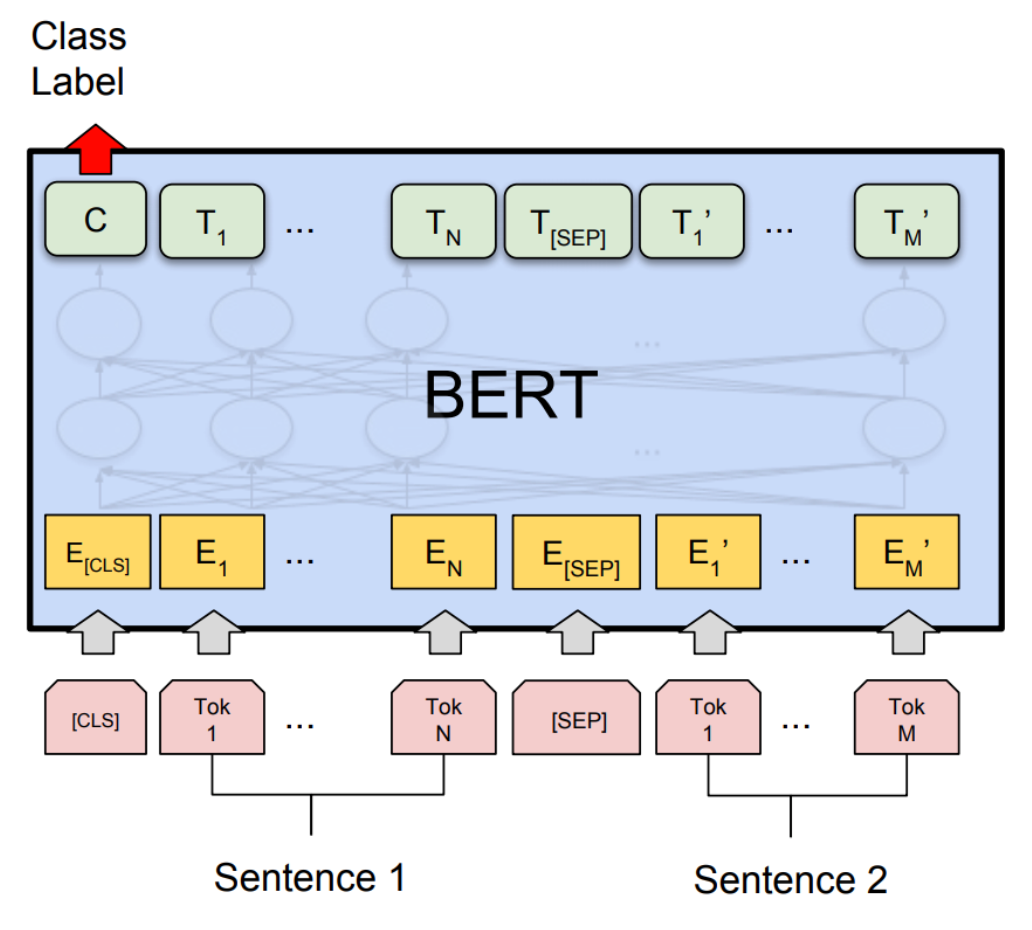

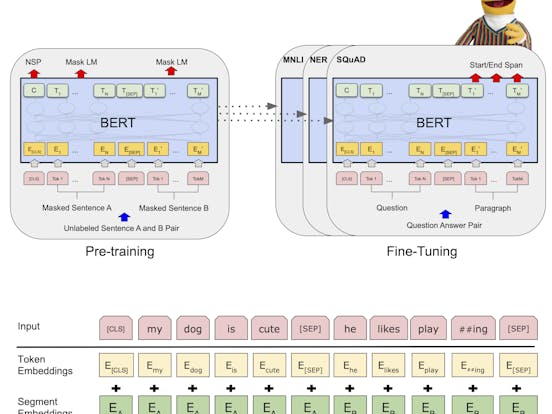

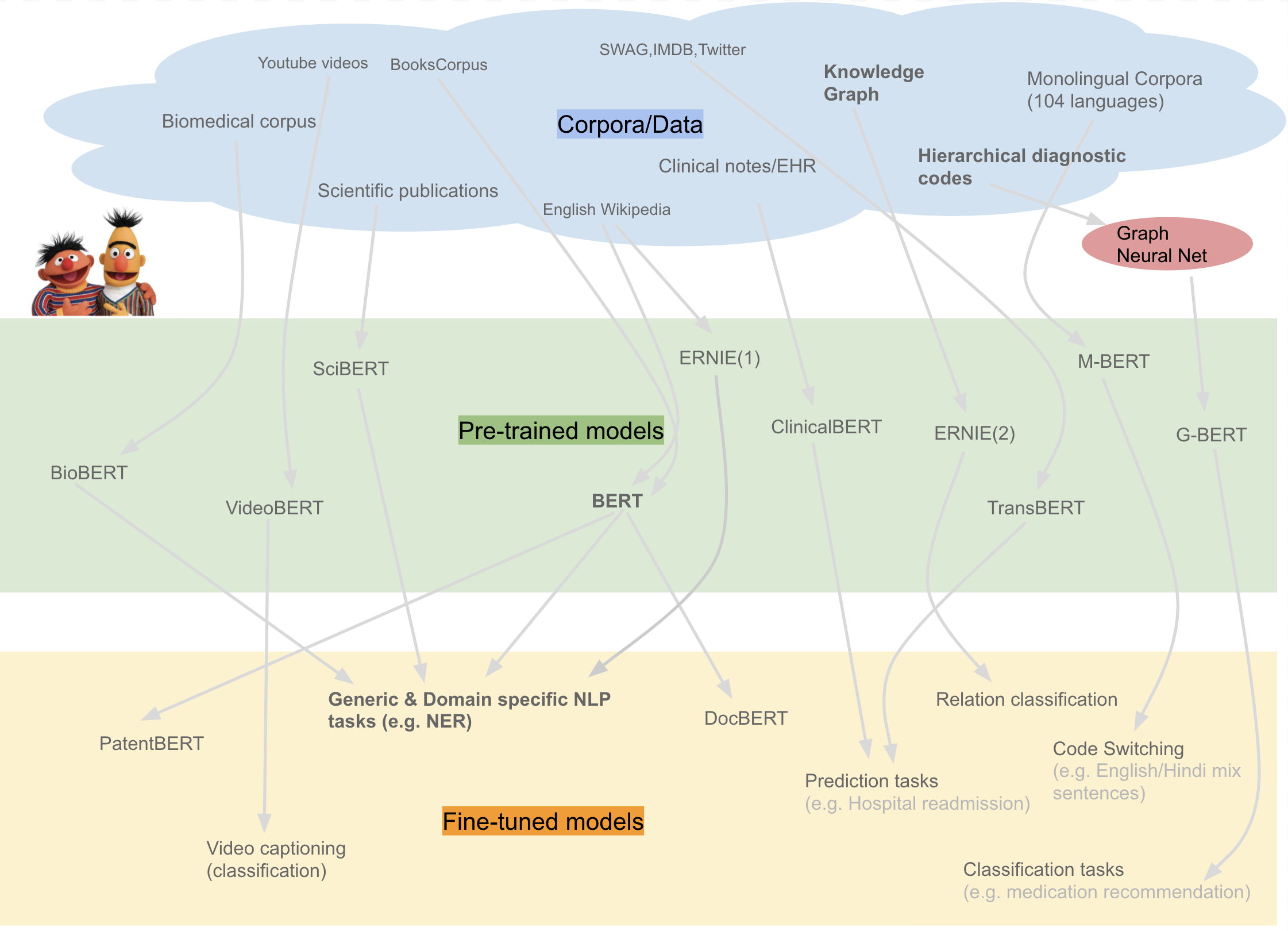

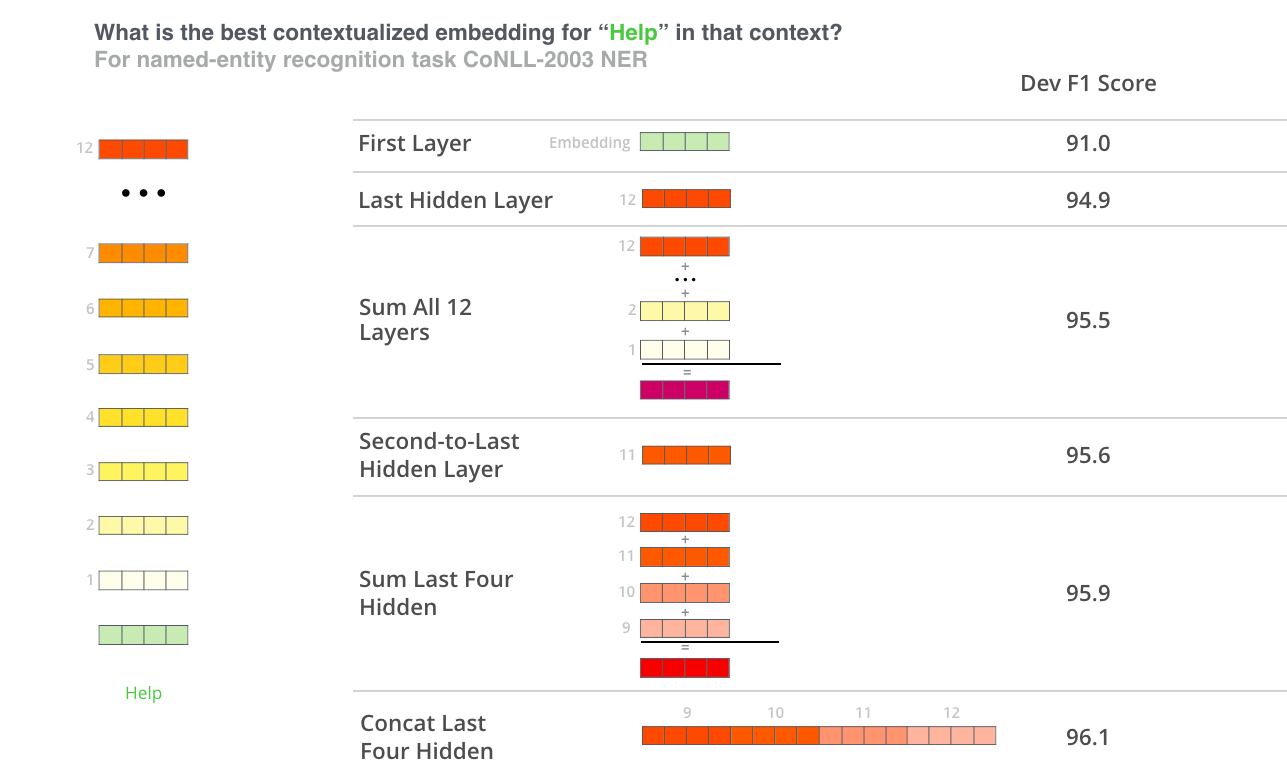

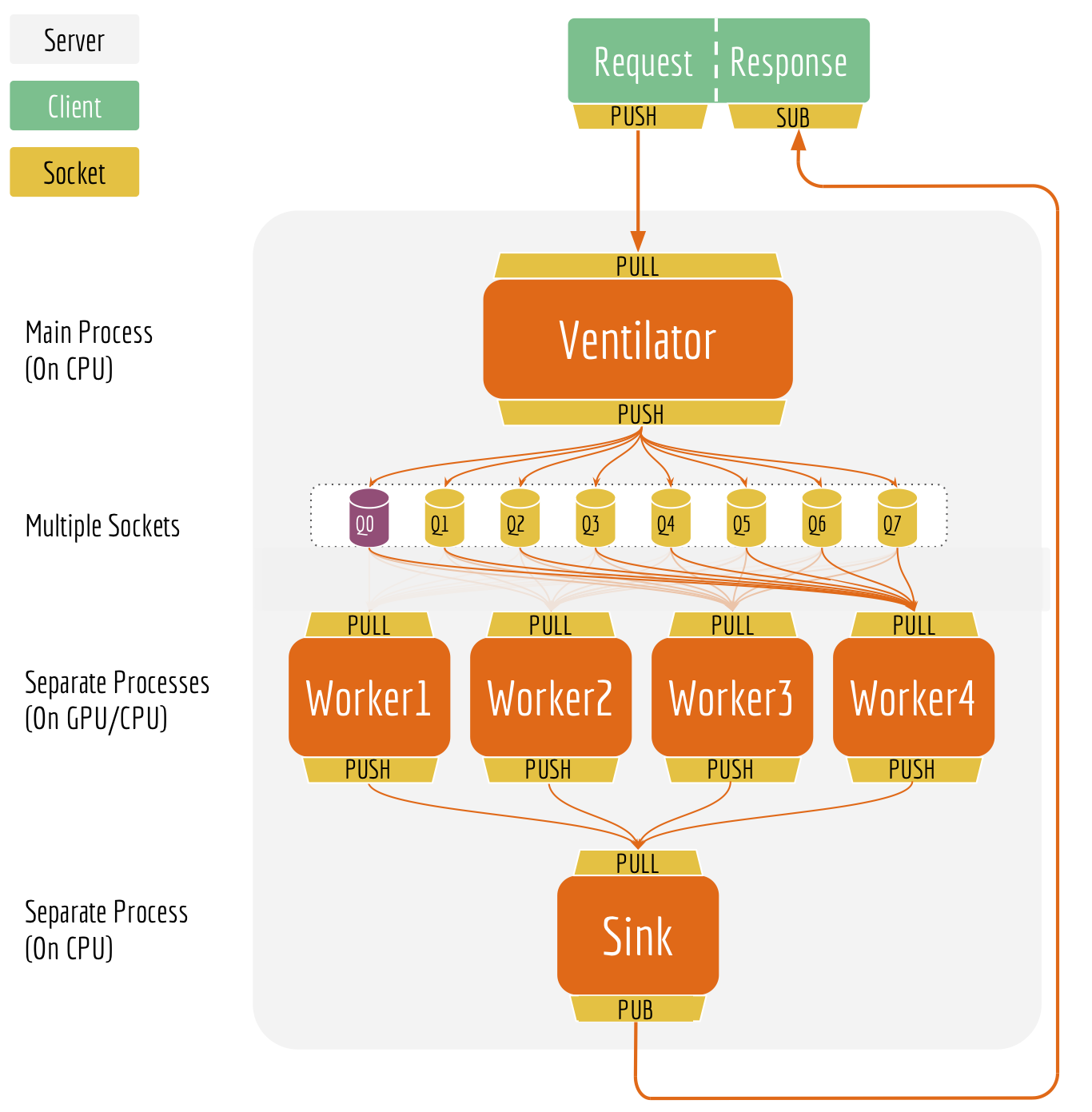

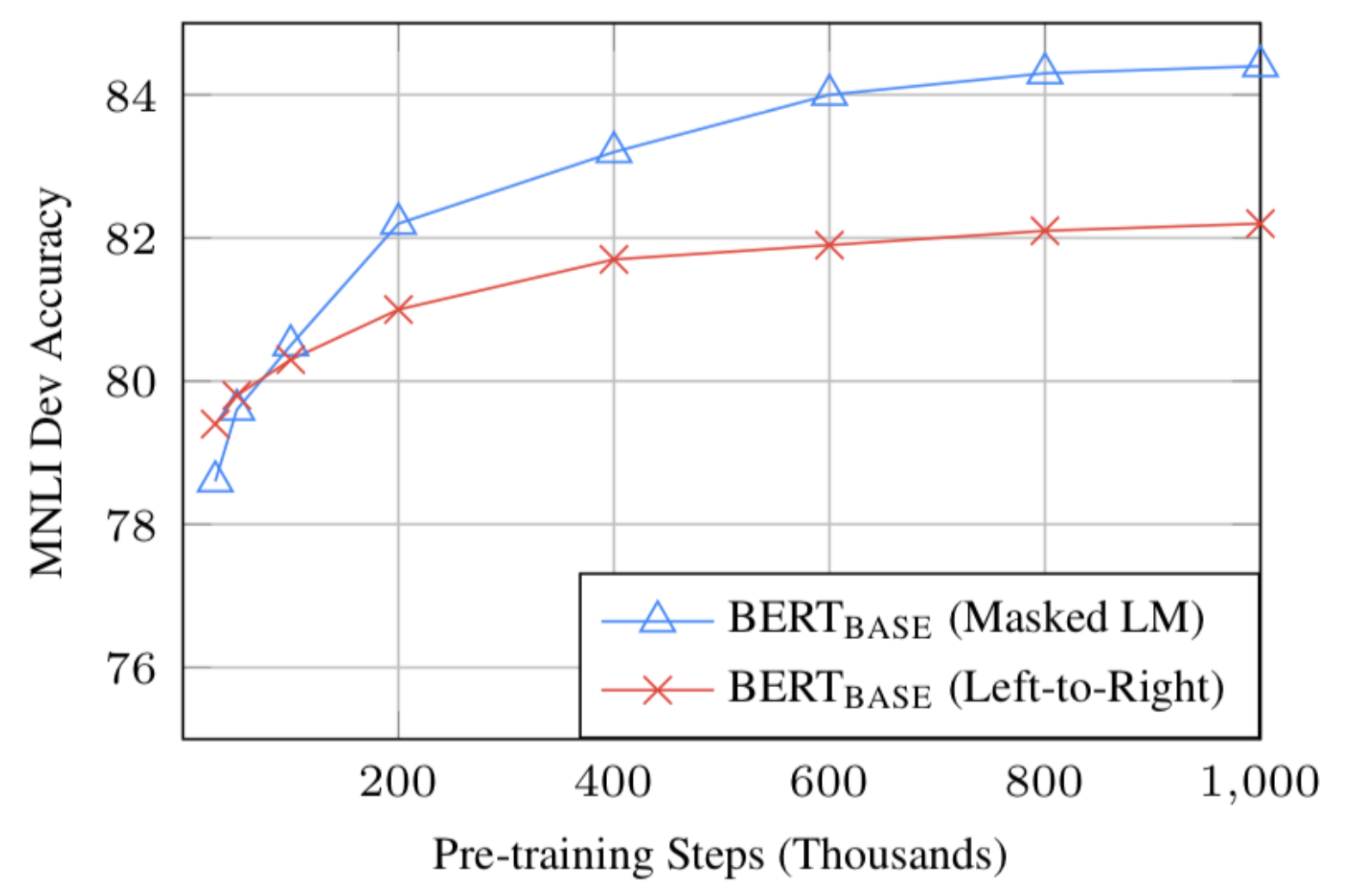

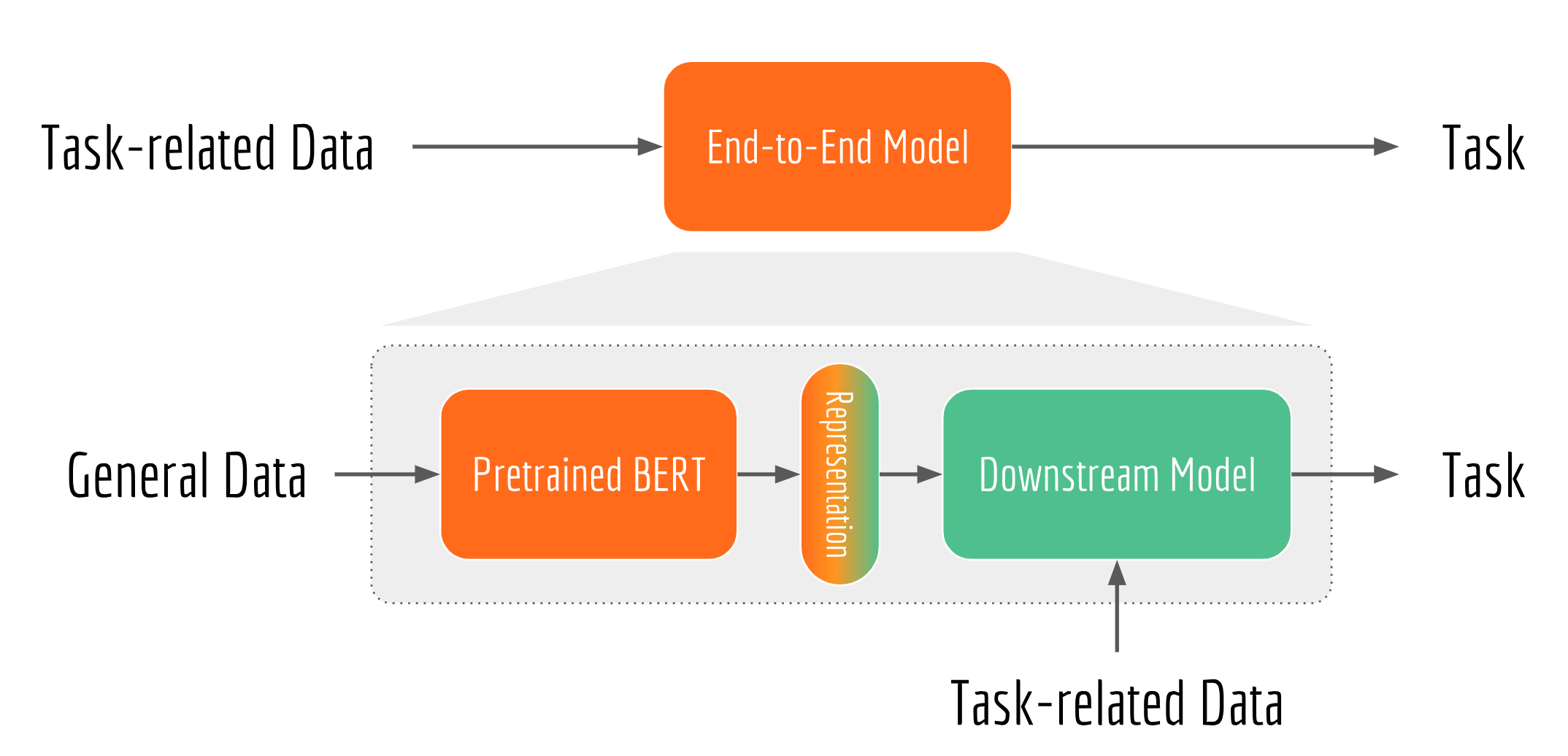

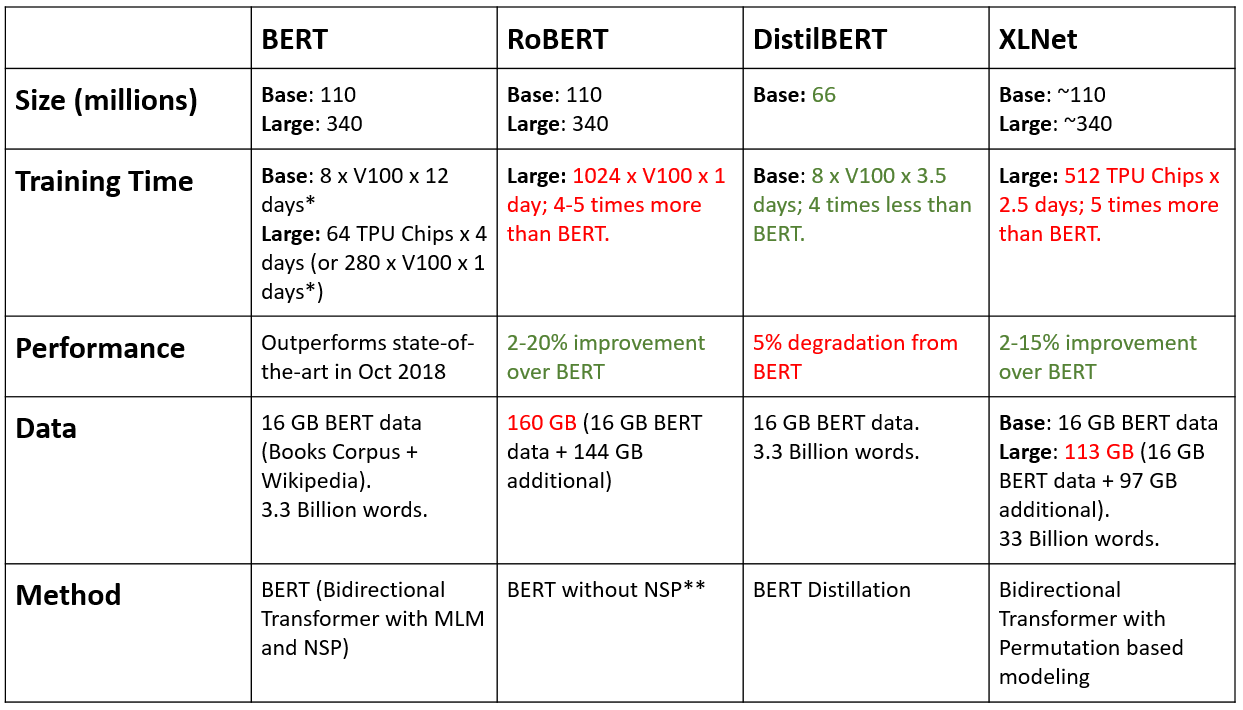

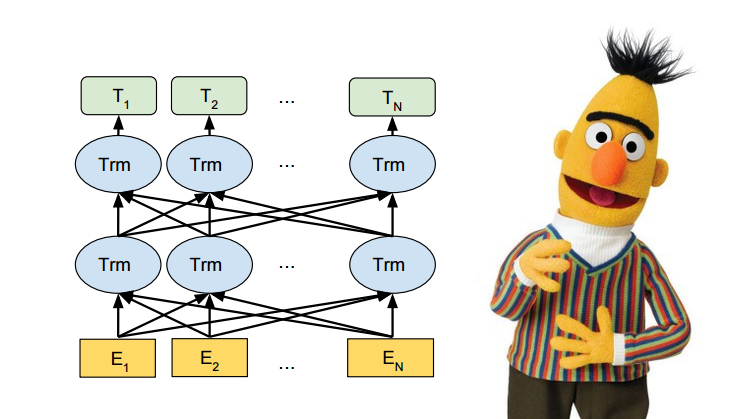

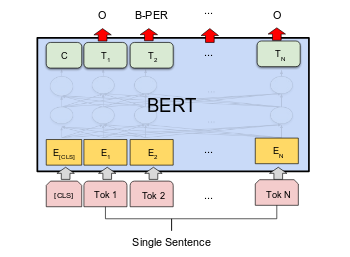

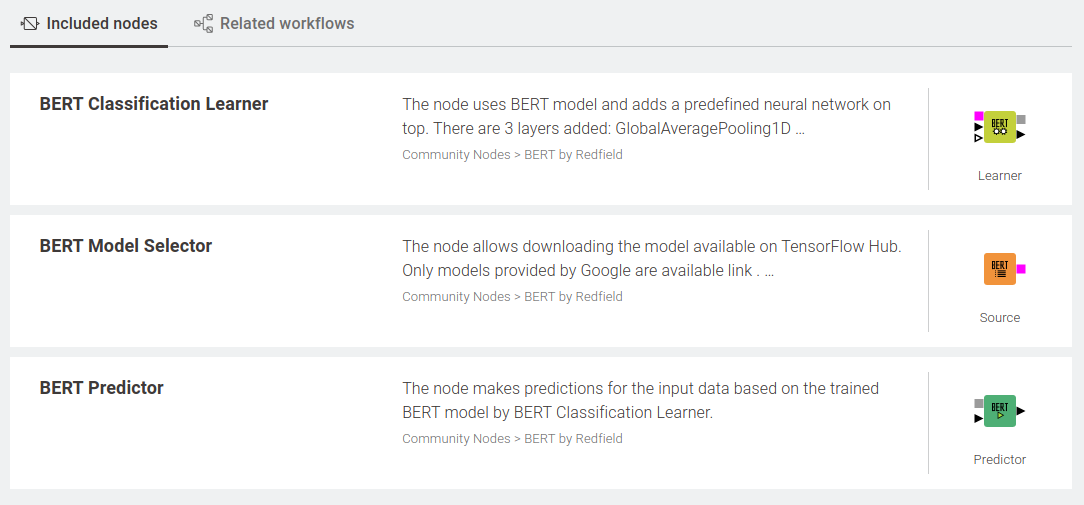

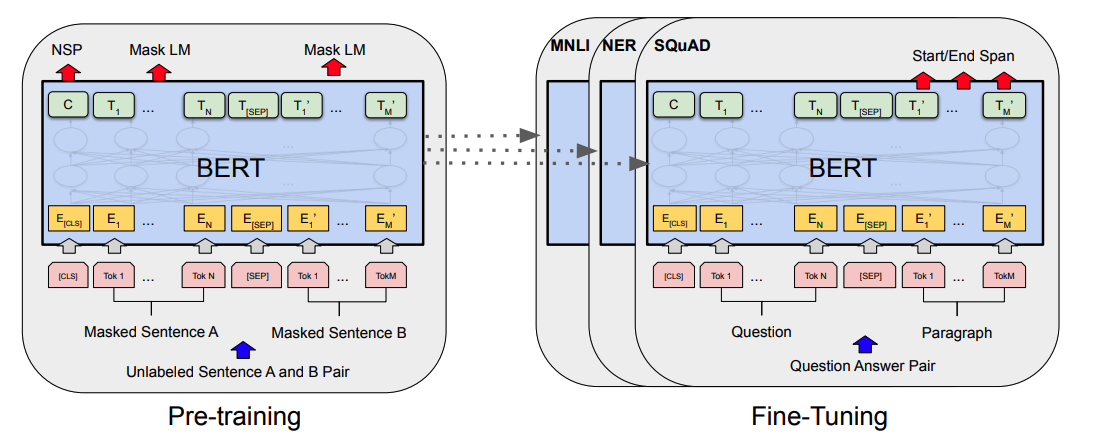

We'll explain the BERT model in detail in a later tutorial, but this is the pre-trained model released by Google that ran for many, many hours on Wikipedia and BookCorpus, a dataset containing 10,000 books of different genres. With a little modification, this model is responsible for beating NLP benchmarks. We should have created a folder `bert_output` where the fine-tuned model will be saved. We have shown that the standard BERT recipe (including model architecture and training objective) is effective on a wide range of model sizes. BERT-based named entity recognition (NER) tutorial and demo.
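The folder layout described so far (TSV files in `data`, plus an output folder for the fine-tuned model) can be sketched with the standard library. This is only an illustration under stated assumptions: the output folder is written here as `bert_output` and placed inside the BERT directory, and the file names `train.tsv` and `dev.tsv` and the (label, text) column order are hypothetical, not taken from the original tutorial.

```python
import csv
import tempfile
from pathlib import Path


def prepare_layout(bert_dir, rows_by_file):
    """Create data/ and an output folder under bert_dir, then write TSV files.

    rows_by_file maps a file name (hypothetical, e.g. "train.tsv") to a list
    of rows; each row is a sequence of column values.
    """
    bert_dir = Path(bert_dir)
    data_dir = bert_dir / "data"
    output_dir = bert_dir / "bert_output"  # assumed name and location
    data_dir.mkdir(parents=True, exist_ok=True)
    output_dir.mkdir(parents=True, exist_ok=True)

    for name, rows in rows_by_file.items():
        with open(data_dir / name, "w", newline="", encoding="utf-8") as f:
            # Tab-separated output; no header row is written.
            csv.writer(f, delimiter="\t").writerows(rows)
    return data_dir, output_dir


# Toy rows of (label, text). The column order is illustrative only.
example_rows = {
    "train.tsv": [(1, "a wonderful film"), (0, "a dull, overlong mess")],
    "dev.tsv": [(1, "surprisingly good")],
}

# A temporary directory stands in for the real BERT directory.
with tempfile.TemporaryDirectory() as tmp:
    data_dir, output_dir = prepare_layout(Path(tmp) / "bert", example_rows)
    tsv_names = sorted(p.name for p in data_dir.glob("*.tsv"))
    output_exists = output_dir.is_dir()

print(tsv_names, output_exists)
```

In a real run you would pass the actual BERT directory instead of a temporary one, and match the column layout to whatever the fine-tuning script you use expects.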

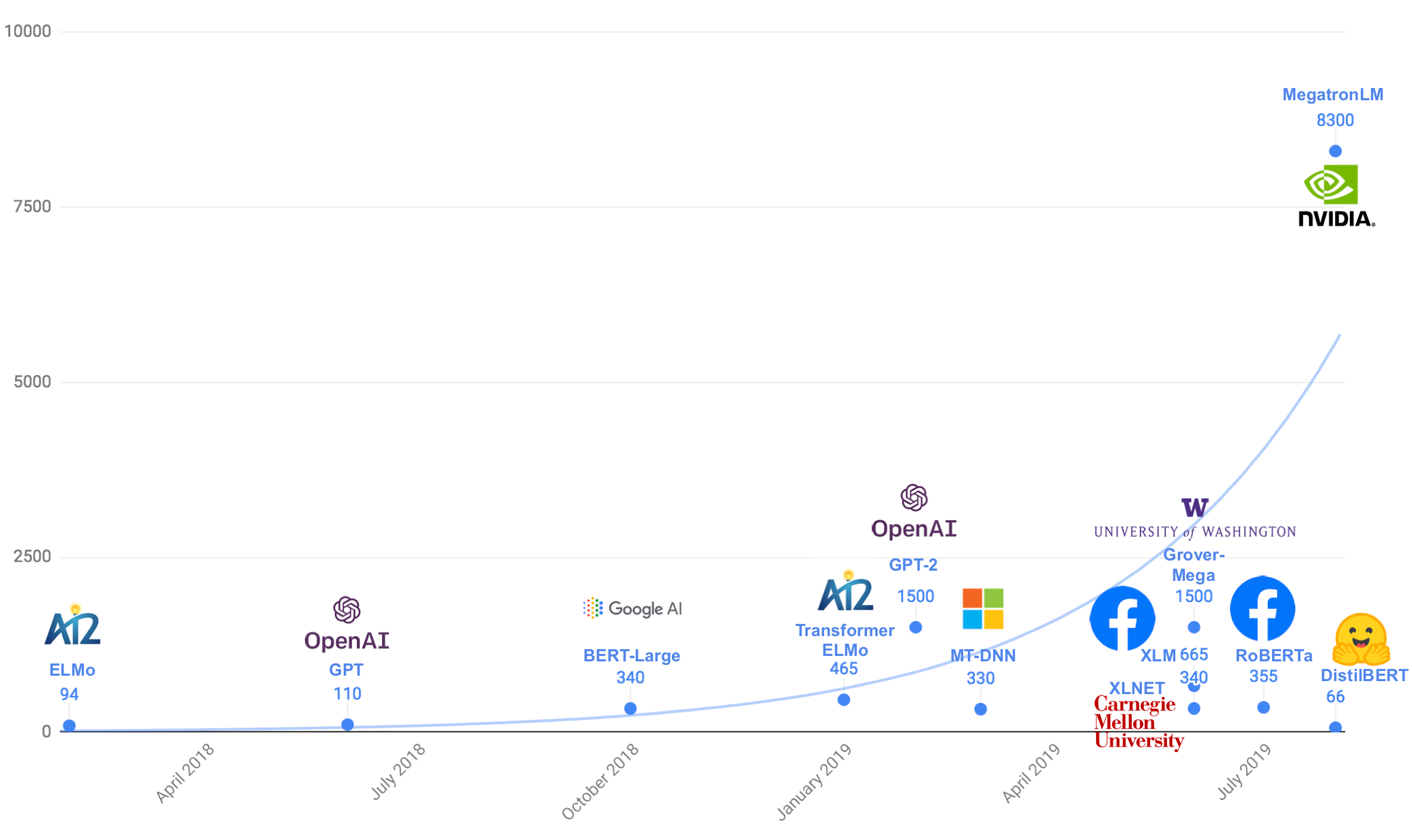

Today we're excited to share that BERT is... One of the biggest milestones in the recent evolution of NLP is the release of Google's BERT, which is described as the beginning of a new era in NLP. On the Importance of Pre-training Compact Models. March 12, 2020 (October 9, 2020): exploring more capabilities of Google's pre-trained model BERT (GitHub), we are diving in to check how good it is at finding entities in a sentence.

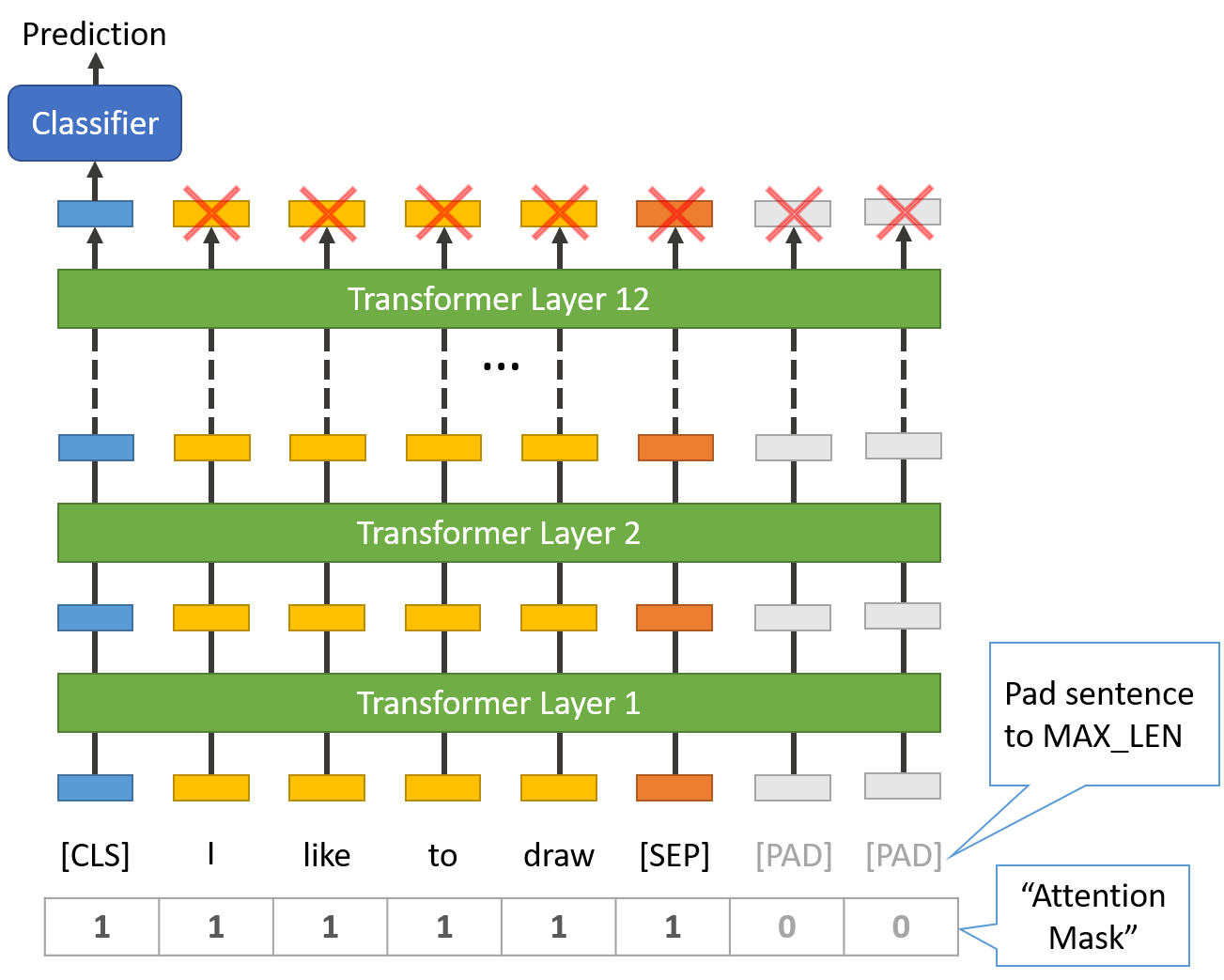

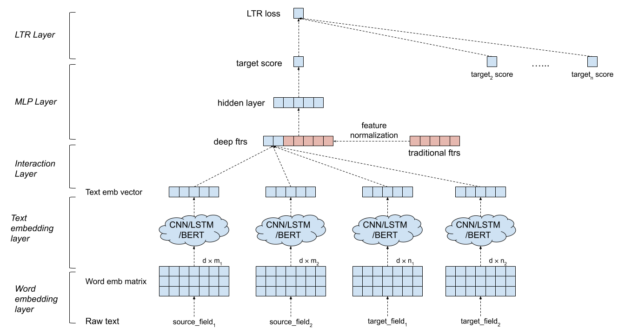

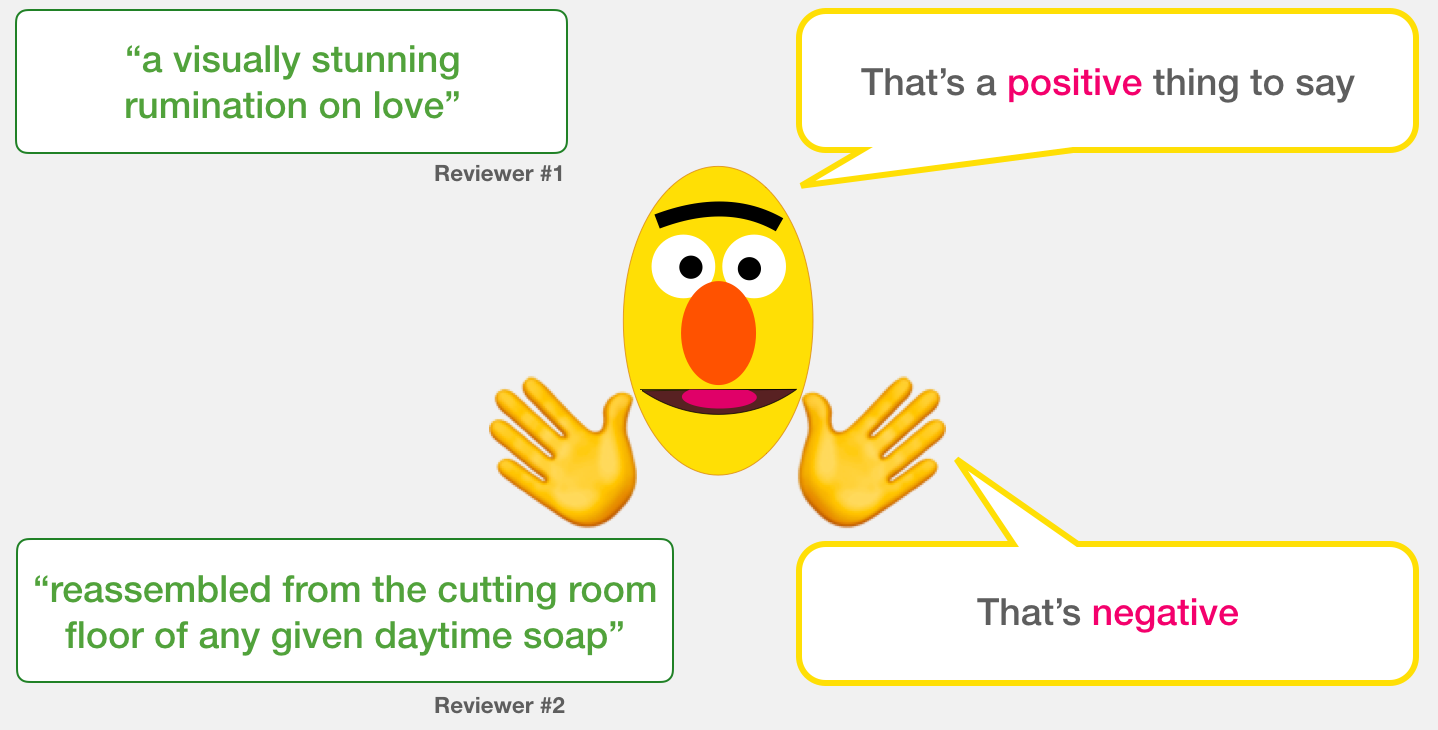

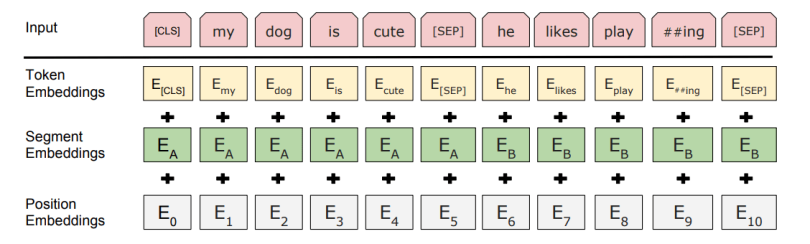

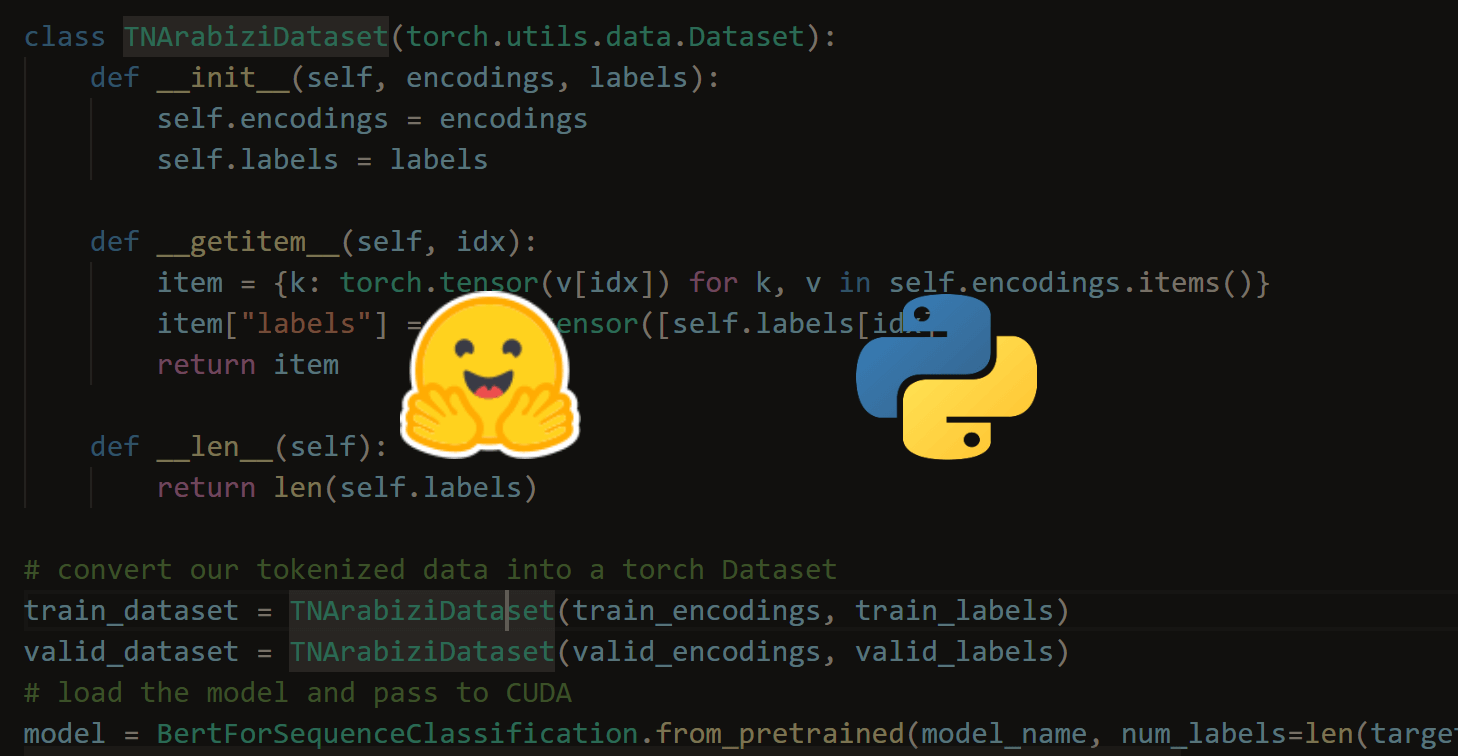

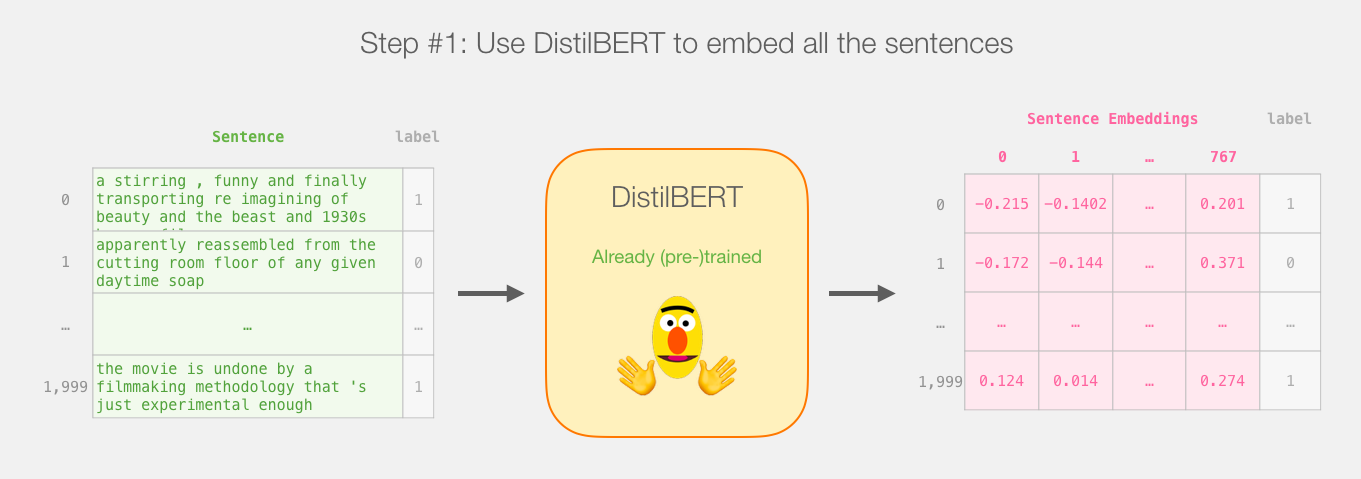

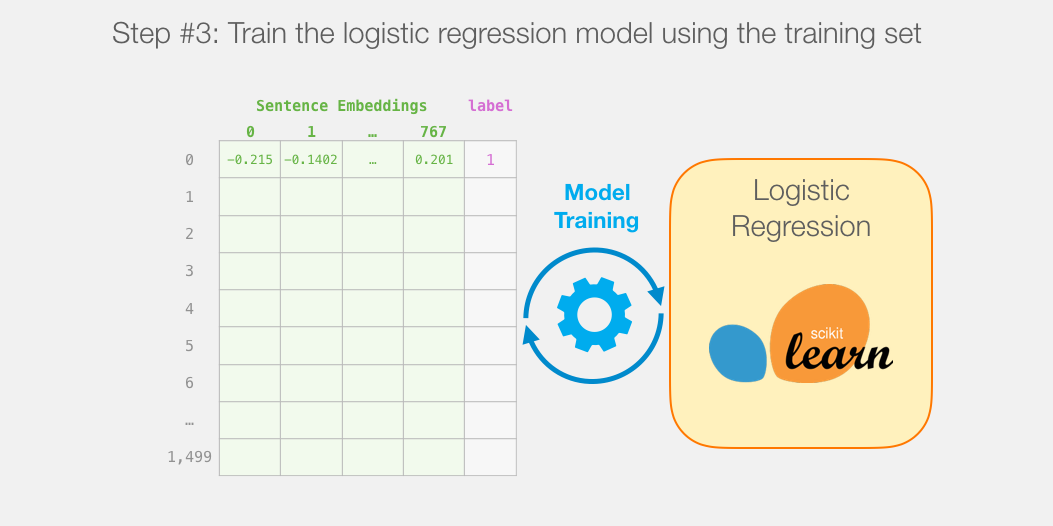

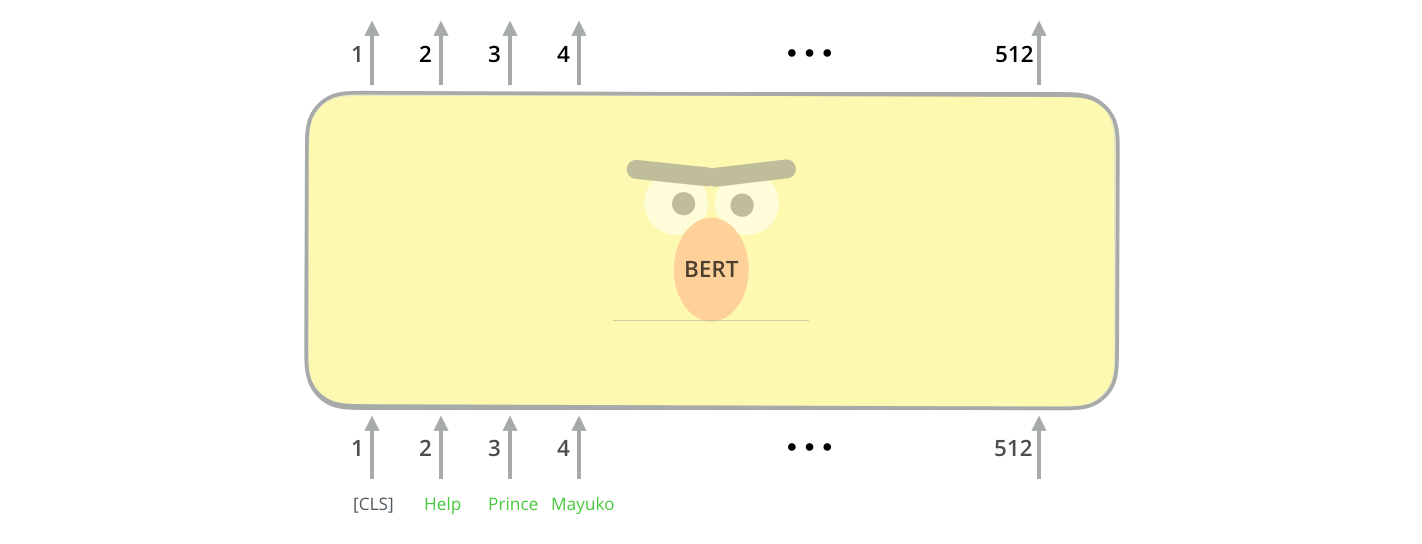

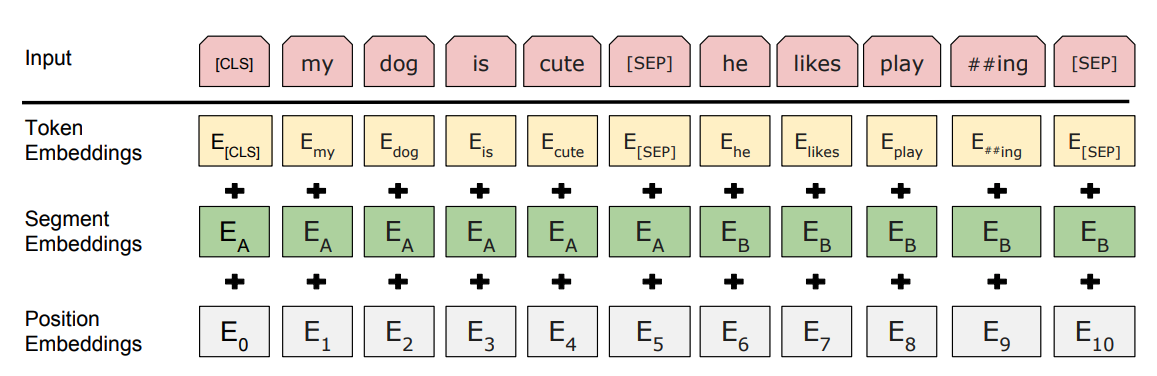

Load the IMDB dataset. Load a BERT model. Now let's import PyTorch, the pretrained BERT model, and a BERT tokenizer. In addition to training a model, you will learn how to preprocess text into an appropriate format. This tutorial describes how to use the Google APIs Client Library for Python to call the AI Platform Training REST APIs in your Python applications.
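To give a feel for what preprocessing text into an appropriate format means for BERT, here is a minimal sketch of greedy longest-match-first subword splitting, the strategy WordPiece tokenizers apply at inference time, followed by wrapping the sequence in `[CLS]` and `[SEP]`. The tiny vocabulary and the helper names `wordpiece` and `encode` are invented for illustration; a real pretrained model ships its own vocabulary and tokenizer, which also handle punctuation and casing rules that this sketch ignores.

```python
def wordpiece(word, vocab, unk="[UNK]"):
    """Split one word into subword pieces by greedy longest-match-first."""
    pieces = []
    start = 0
    while start < len(word):
        end = len(word)
        piece = None
        # Try the longest remaining substring first, then shrink it.
        while start < end:
            candidate = word[start:end]
            if start > 0:
                candidate = "##" + candidate  # marks a word-internal piece
            if candidate in vocab:
                piece = candidate
                break
            end -= 1
        if piece is None:
            # No piece matches at this position: the whole word is unknown.
            return [unk]
        pieces.append(piece)
        start = end
    return pieces


def encode(text, vocab):
    """Lowercase, split on whitespace, and add the [CLS]/[SEP] markers."""
    tokens = ["[CLS]"]
    for word in text.lower().split():
        tokens.extend(wordpiece(word, vocab))
    tokens.append("[SEP]")
    return tokens


# Toy vocabulary, invented for this example.
TOY_VOCAB = {
    "[CLS]", "[SEP]", "[UNK]",
    "the", "movie", "was", "great", "un", "##watch", "##able",
}

tokens = encode("The movie was unwatchable", TOY_VOCAB)
print(tokens)
# ['[CLS]', 'the', 'movie', 'was', 'un', '##watch', '##able', '[SEP]']
```

In practice the tokenizer bundled with the pretrained model should be used, since the model only understands the vocabulary it was trained with.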

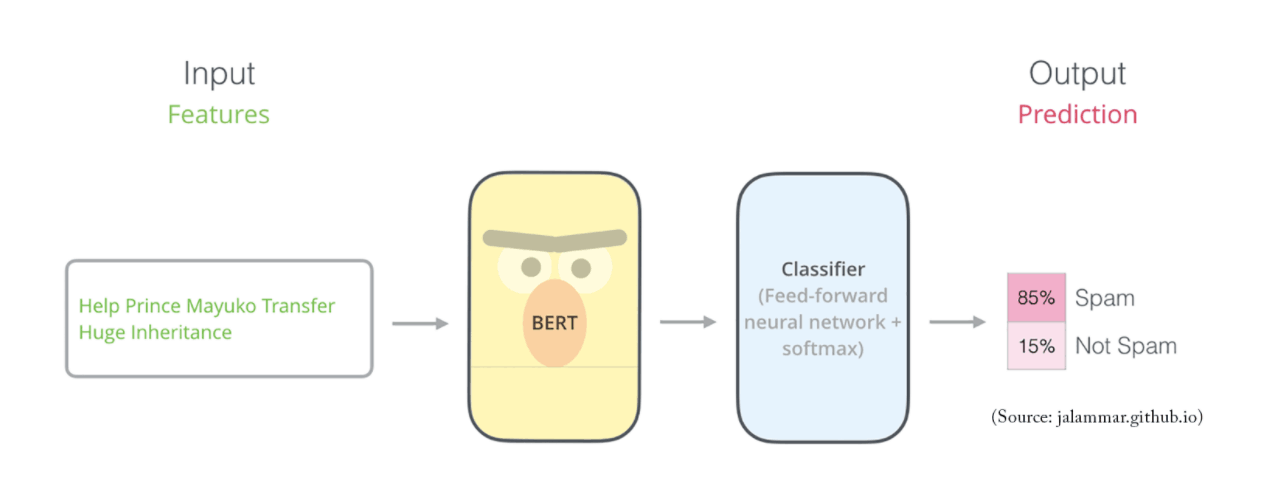

The pre-trained BERT model should have been saved in the BERT directory. In this notebook, you will... In this notebook, I'll use Hugging Face's transformers library to fine-tune a pretrained BERT model for a classification task. Google BERT NLP machine learning tutorial.

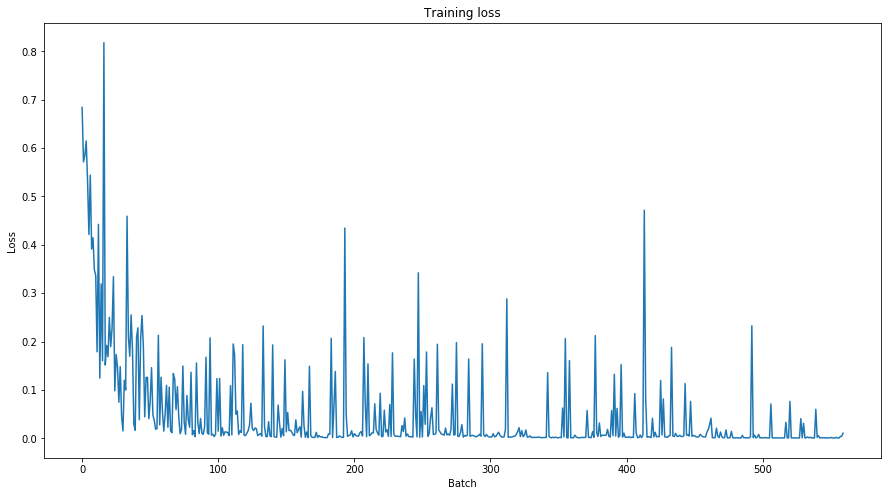

This tutorial contains complete code to fine-tune BERT to perform sentiment analysis on a dataset of plain-text IMDB movie reviews. Training a model using the pre-trained BERT model. Smaller BERT models: this is a release of 24 smaller BERT models (English only, uncased, trained with WordPiece masking) referenced in "Well-Read Students Learn Better". The code snippets and examples in the rest of this documentation use this Python client library.

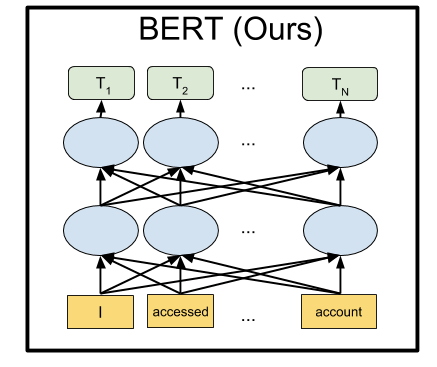

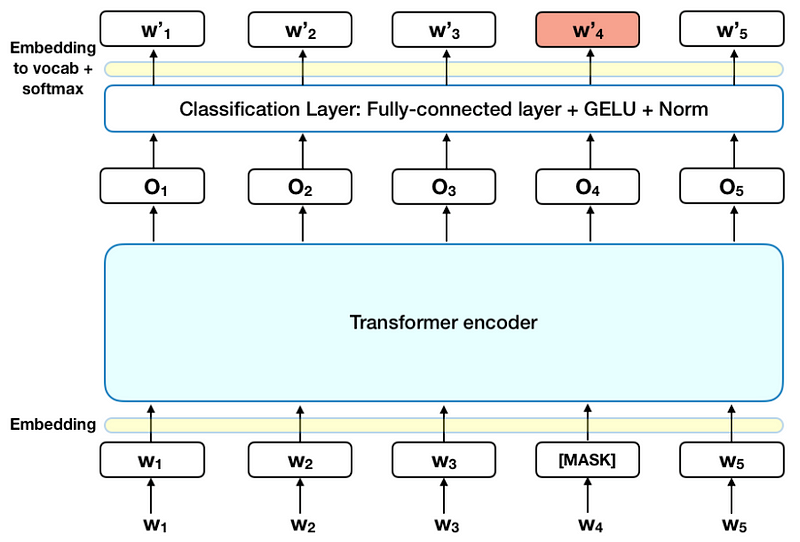

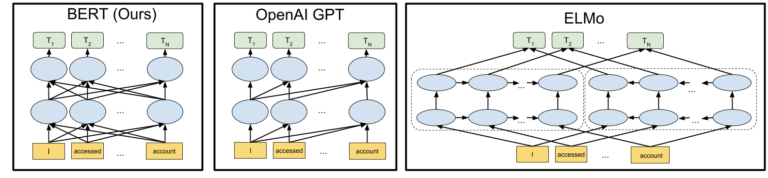

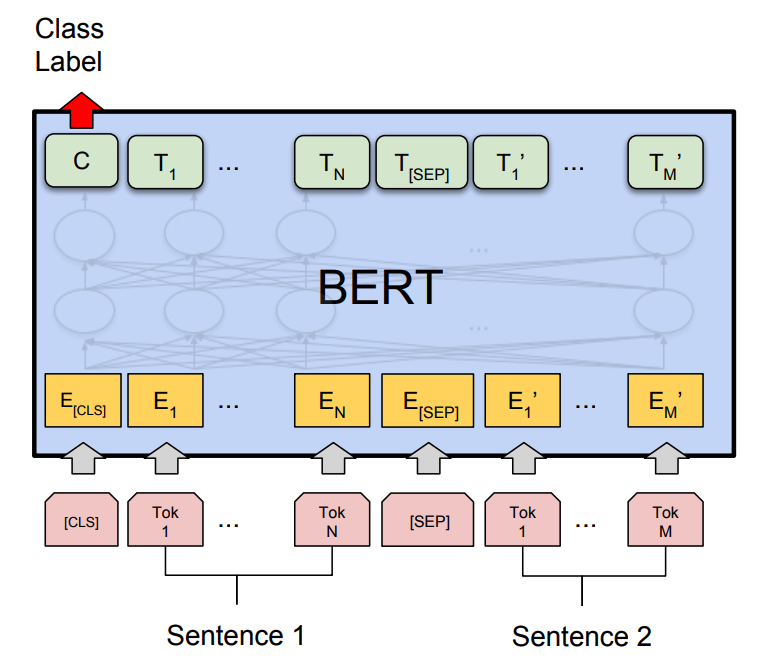

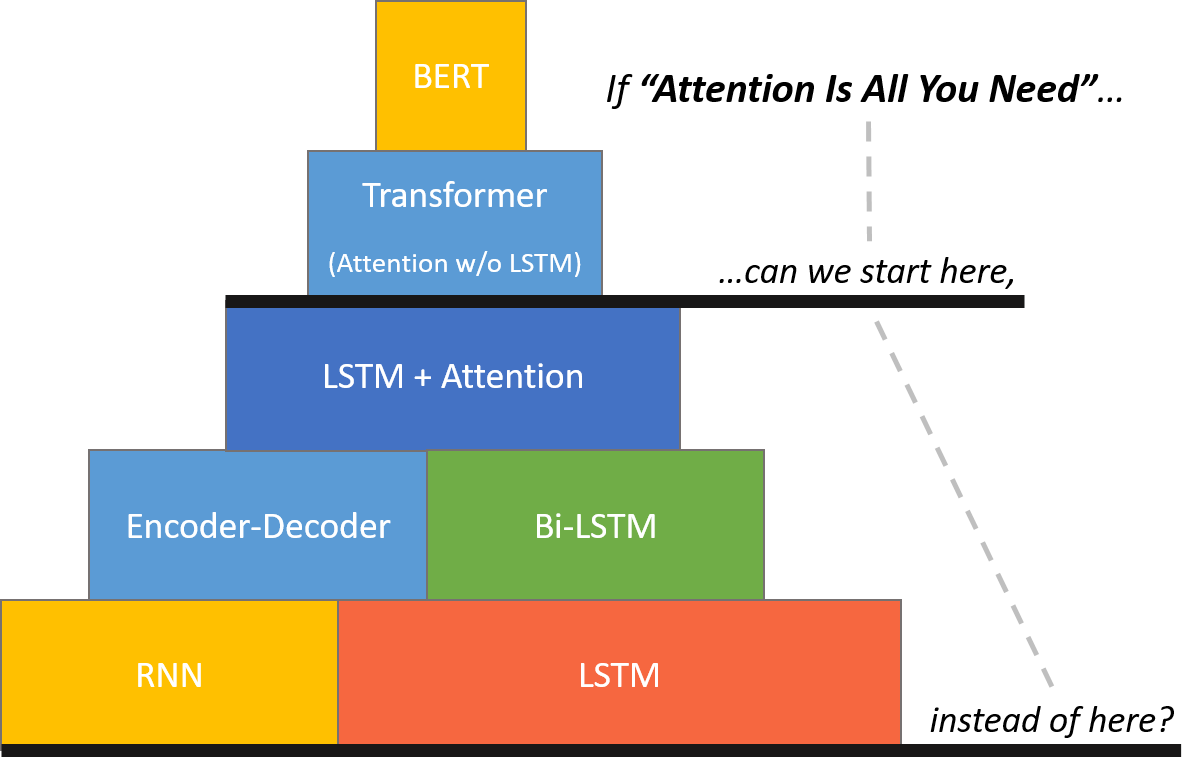

You will be creating a model in your Google Cloud project in this tutorial. There are plenty of applications for machine learning, and one of those is natural language processing, or NLP. We've invested deeply in language understanding research, and last year we introduced how BERT language understanding systems are helping to deliver more relevant results in Google Search. BERT (Bidirectional Encoder Representations from Transformers), released in late 2018, is the model we will use in this tutorial to provide readers with a better understanding of, and practical guidance for, using transfer learning models in NLP.

Then I will compare BERT's performance with a baseline.
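The text does not say which baseline is meant. As one possible reading, the sketch below compares a model's accuracy against a majority-class baseline, which always predicts the most frequent training label. The labels and the `model_preds` list are made-up placeholders, not results from any actual BERT run.

```python
from collections import Counter


def accuracy(y_true, y_pred):
    """Fraction of positions where the prediction equals the true label."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def majority_baseline(train_labels, n):
    """Predict the most frequent training label for all n test examples."""
    most_common_label = Counter(train_labels).most_common(1)[0][0]
    return [most_common_label] * n


# Placeholder data: 1 = positive review, 0 = negative review.
train_labels = [1, 1, 1, 0, 0]
test_labels = [1, 0, 1, 1, 0, 0]
model_preds = [1, 0, 1, 0, 0, 0]  # hypothetical classifier output

baseline_preds = majority_baseline(train_labels, len(test_labels))
baseline_acc = accuracy(test_labels, baseline_preds)
model_acc = accuracy(test_labels, model_preds)

print(f"baseline accuracy: {baseline_acc:.3f}")
print(f"model accuracy:    {model_acc:.3f}")
```

A fine-tuned model is only interesting if it clearly beats a trivial baseline like this one on held-out data.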